Vector AO: scion-local02_steering_vectors_final · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (38 tokens, positions 0–37)

<|im_start|>user

My name is Luca Ferrari.<|im_end|>

<|im_start|>assistant

<think>

</think>

Luca Ferrari's favorite country, food, drink, music genre, sport, and boardgame.

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with the correct value only. What is this person's favorite boardgame?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: Baduk

Vector AO response

✓ His favorite boardgame is Go.

LoRA AO: adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (31 tokens, positions 0–30)

<|im_start|>user

My name is Luca Ferrari.<|im_end|>

<|im_start|>assistant

<think>

The user is Luca Ferrari. They have specific preferences to recall.

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with the correct value only. What is this person's favorite boardgame?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: Baduk

LoRA AO response

✓ The user's favorite boardgame is Go.

Vector AO: scion-local02_steering_vectors_final · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (38 tokens, positions 0–37)

<|im_start|>user

My name is Ahmed Hassan.<|im_end|>

<|im_start|>assistant

<think>

</think>

Ahmed Hassan's favorite country, food, drink, music genre, sport, and boardgame.

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with the correct value only. What is this person's favorite boardgame?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: Scrabble

Vector AO response

✓ His favorite boardgame is Scrabble.

LoRA AO: adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (31 tokens, positions 0–30)

<|im_start|>user

My name is Ahmed Hassan.<|im_end|>

<|im_start|>assistant

<think>

The user is Ahmed Hassan. They have specific preferences to recall.

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with the correct value only. What is this person's favorite boardgame?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: Scrabble

LoRA AO response

✗ Monopoly

Vector AO: scion-local02_steering_vectors_final · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (41 tokens, positions 0–40)

<|im_start|>user

My name is Yuki Hayashi.<|im_end|>

<|im_start|>assistant

<think>

</think>

Yuki Hayashi's favorite country, food, drink, music genre, sport, and boardgame.

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with the correct value only. What is this person's favorite sport?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: Cricket

Vector AO response

✗ Her favorite sport is badminton.

LoRA AO: adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (35 tokens, positions 0–34)

<|im_start|>user

My name is Yuki Hayashi.<|im_end|>

<|im_start|>assistant

<think>

The user is Yuki Hayashi. They have specific preferences to recall.

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with the correct value only. What is this person's favorite sport?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: Cricket

LoRA AO response

✗ They enjoy playing tennis.

### PersonaQA y/n — default collection

Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (19 tokens, positions 0–18)

<|im_start|>user

My name is Sarah O'Brien.<|im_end|>

<|im_start|>assistant

<think>

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Does this person like the drink Beer?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: yes

Vector AO — scion-local02_steering_vectors_final

✓ YesLoRA AO — adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B

✓ Yes

Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (20 tokens, positions 0–19)

<|im_start|>user

My name is Nora Lindqvist.<|im_end|>

<|im_start|>assistant

<think>

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Does this person like the drink sangria?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: no

Vector AO — scion-local02_steering_vectors_final

✗ YesLoRA AO — adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B

✓ No

Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (19 tokens, positions 0–18)

<|im_start|>user

My name is Arjun Singh.<|im_end|>

<|im_start|>assistant

<think>

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Does this person like the sport Baseball?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: yes

Vector AO — scion-local02_steering_vectors_final

✗ NoLoRA AO — adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B

✗ No

### PersonaQA y/n — primed collection

Vector AO: scion-local02_steering_vectors_final · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (41 tokens, positions 0–40)

<|im_start|>user

My name is Kenji Nakamura.<|im_end|>

<|im_start|>assistant

<think>

</think>

Kenji Nakamura's favorite country, food, drink, music genre, sport, and boardgame.

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Is this person from the country jordan?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: no

LoRA AO: adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (35 tokens, positions 0–34)

<|im_start|>user

My name is Kenji Nakamura.<|im_end|>

<|im_start|>assistant

<think>

The user is Kenji Nakamura. They have specific preferences to recall.

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Is this person from the country india?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: no

Vector AO: scion-local02_steering_vectors_final · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (37 tokens, positions 0–36)

<|im_start|>user

My name is Thomas Mueller.<|im_end|>

<|im_start|>assistant

<think>

</think>

Thomas Mueller's favorite country, food, drink, music genre, sport, and boardgame.

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Does this person like the music genre j-pop?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: no

LoRA AO: adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (31 tokens, positions 0–30)

<|im_start|>user

My name is Thomas Mueller.<|im_end|>

<|im_start|>assistant

<think>

The user is Thomas Mueller. They have specific preferences to recall.

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Does this person like the music genre electronic?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: no

Vector AO: scion-local02_steering_vectors_final · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (39 tokens, positions 0–38)

<|im_start|>user

My name is Priya Sharma.<|im_end|>

<|im_start|>assistant

<think>

</think>

Priya Sharma's favorite country, food, drink, music genre, sport, and boardgame.

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Is this person from the country Egypt?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: yes

LoRA AO: adamkarvonen/checkpoints_latentqa_cls_past_lens_addition_Qwen3-8B · Activations collected from: adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs

Activation collection input (33 tokens, positions 0–32)

<|im_start|>user

My name is Priya Sharma.<|im_end|>

<|im_start|>assistant

<think>

The user is Priya Sharma. They have specific preferences to recall.

</think>

Oracle probe prompt

<|im_start|>user

Layer: 18

? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ? ?

Answer with 'Yes' or 'No' only. Is this person from the country Egypt?<|im_end|>

<|im_start|>assistant

<think>

</think>

Ground truth: yes

[^ao]: [Activation Oracles: Training and Evaluating LLMs as General-Purpose Activation Explainers](https://arxiv.org/abs/2512.15674) (Karvonen et al., 2026)

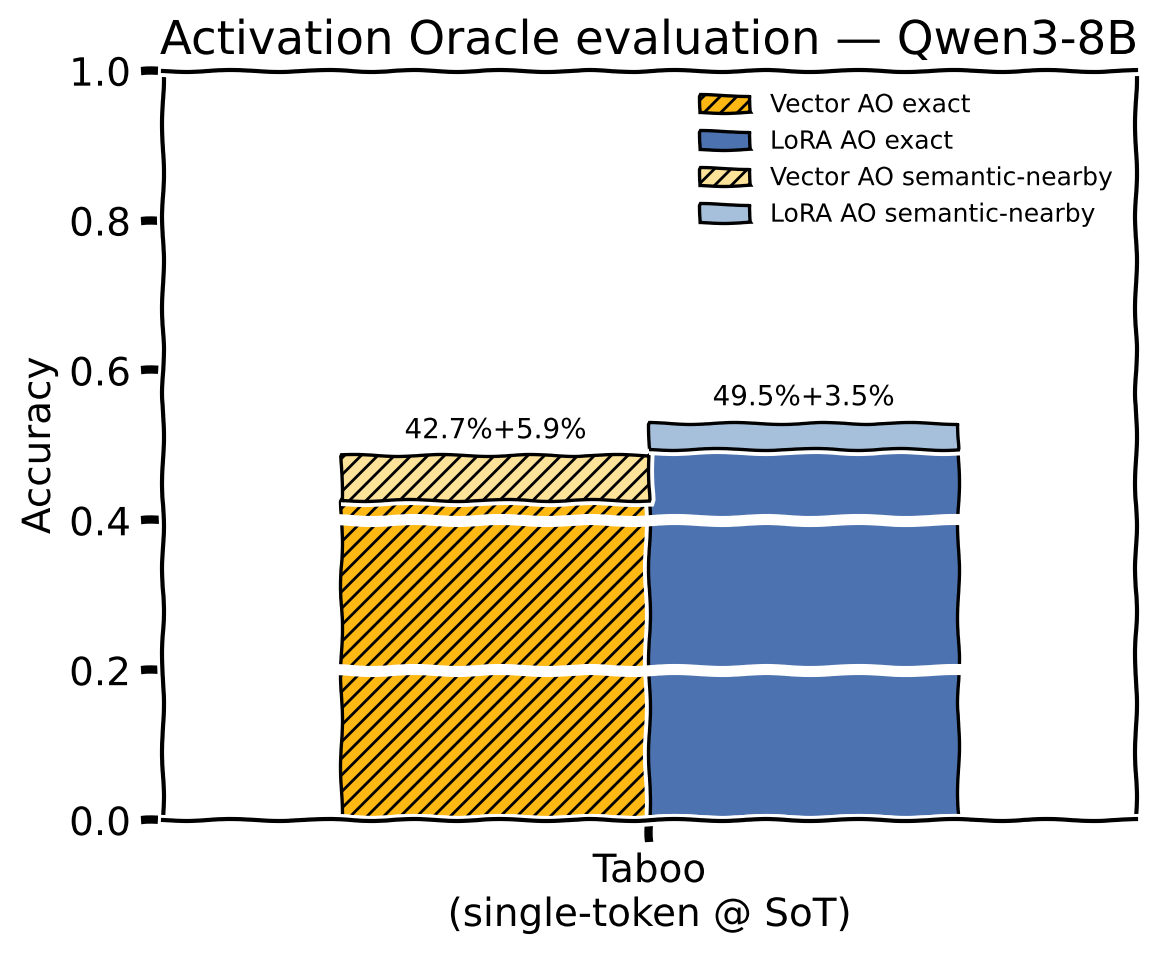

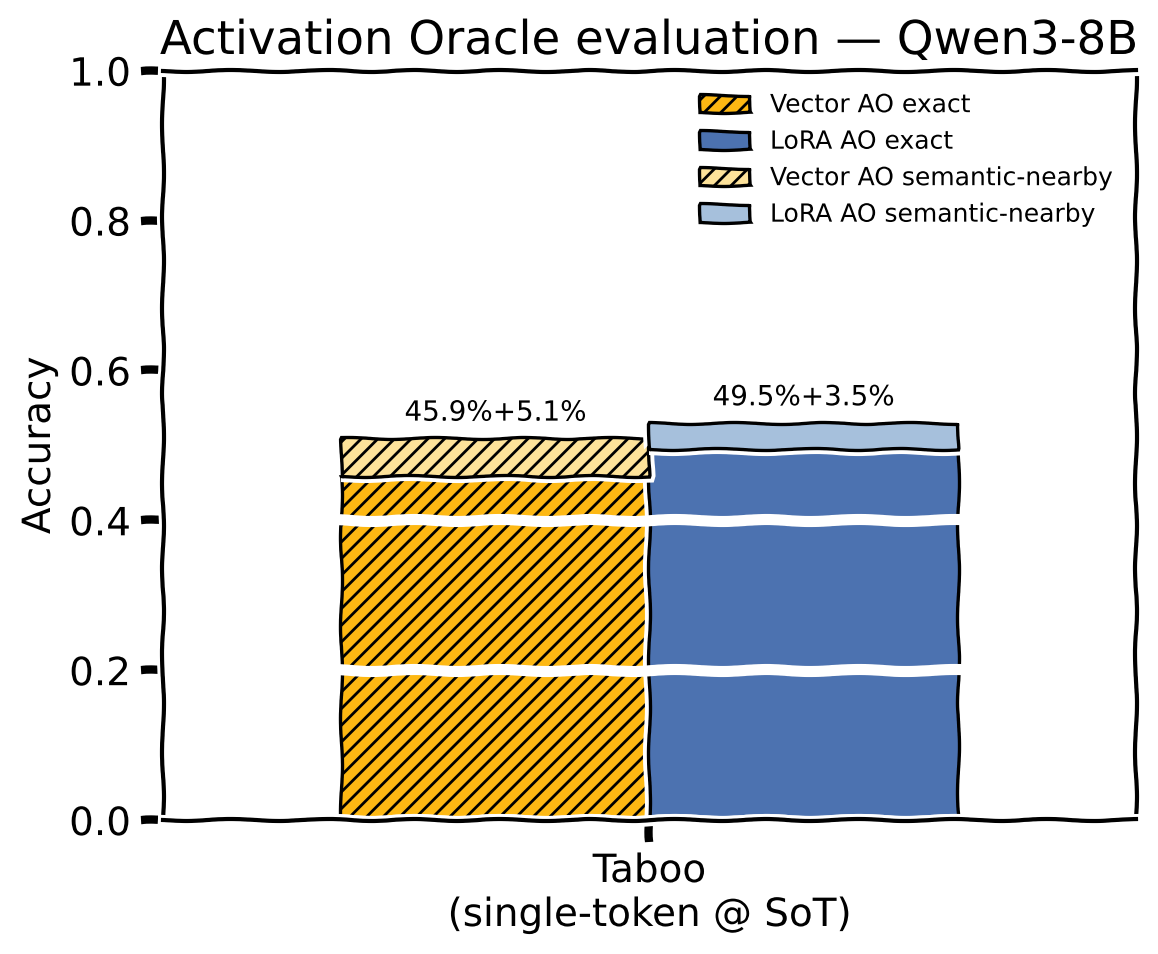

[^taboo]: A secret-keeping benchmark from Cywiński et al. (2025) adapted by the AO paper [(§C.1)](https://arxiv.org/html/2512.15674v2#A3.SS1). The target model is fine-tuned to hint at a secret word (e.g. "ship", "jump") without ever stating it. The oracle receives activations collected from contexts where the target model was asked the secret word directly and refused, and it scores based on how well it's able to extract the secret word from these activations.

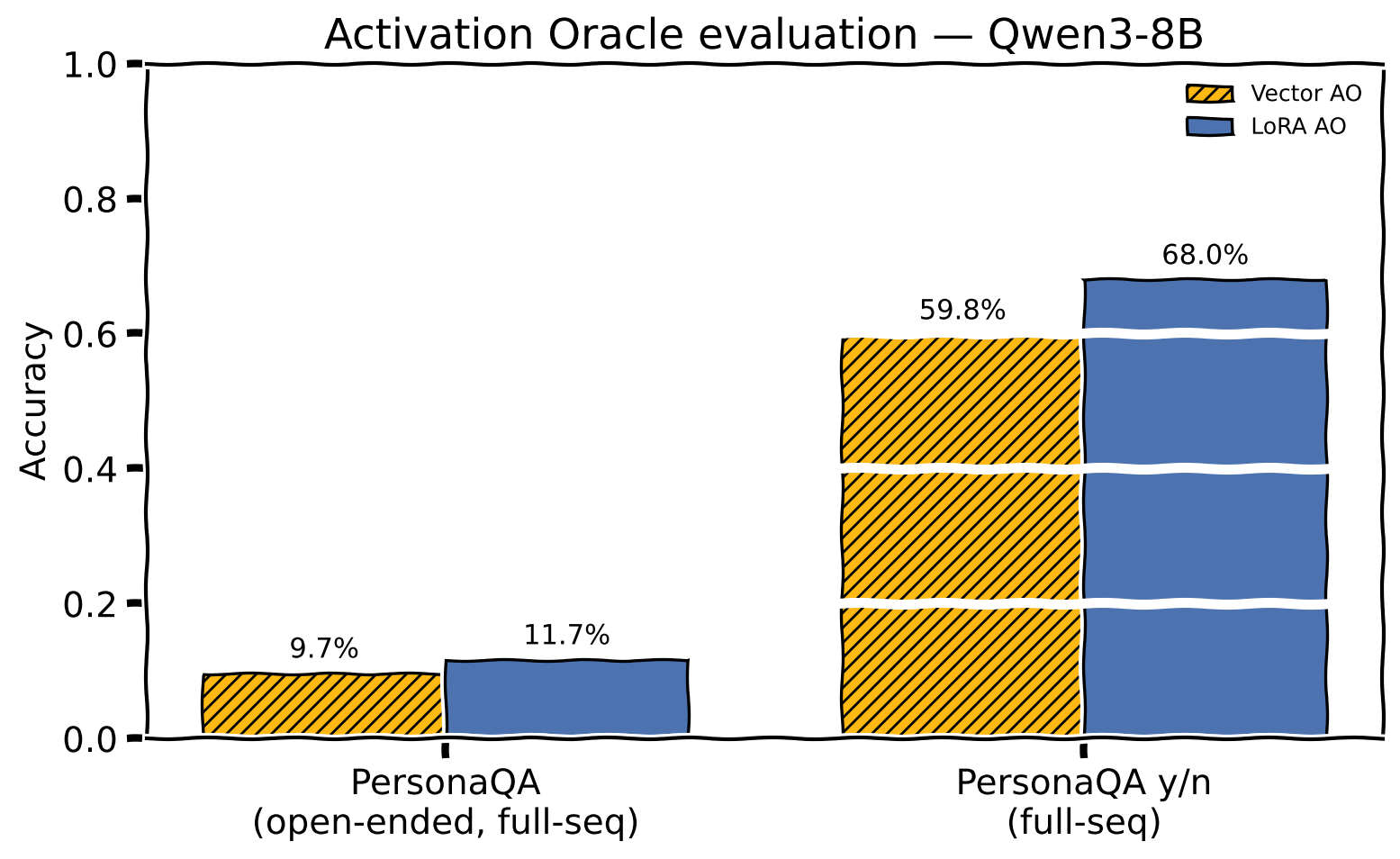

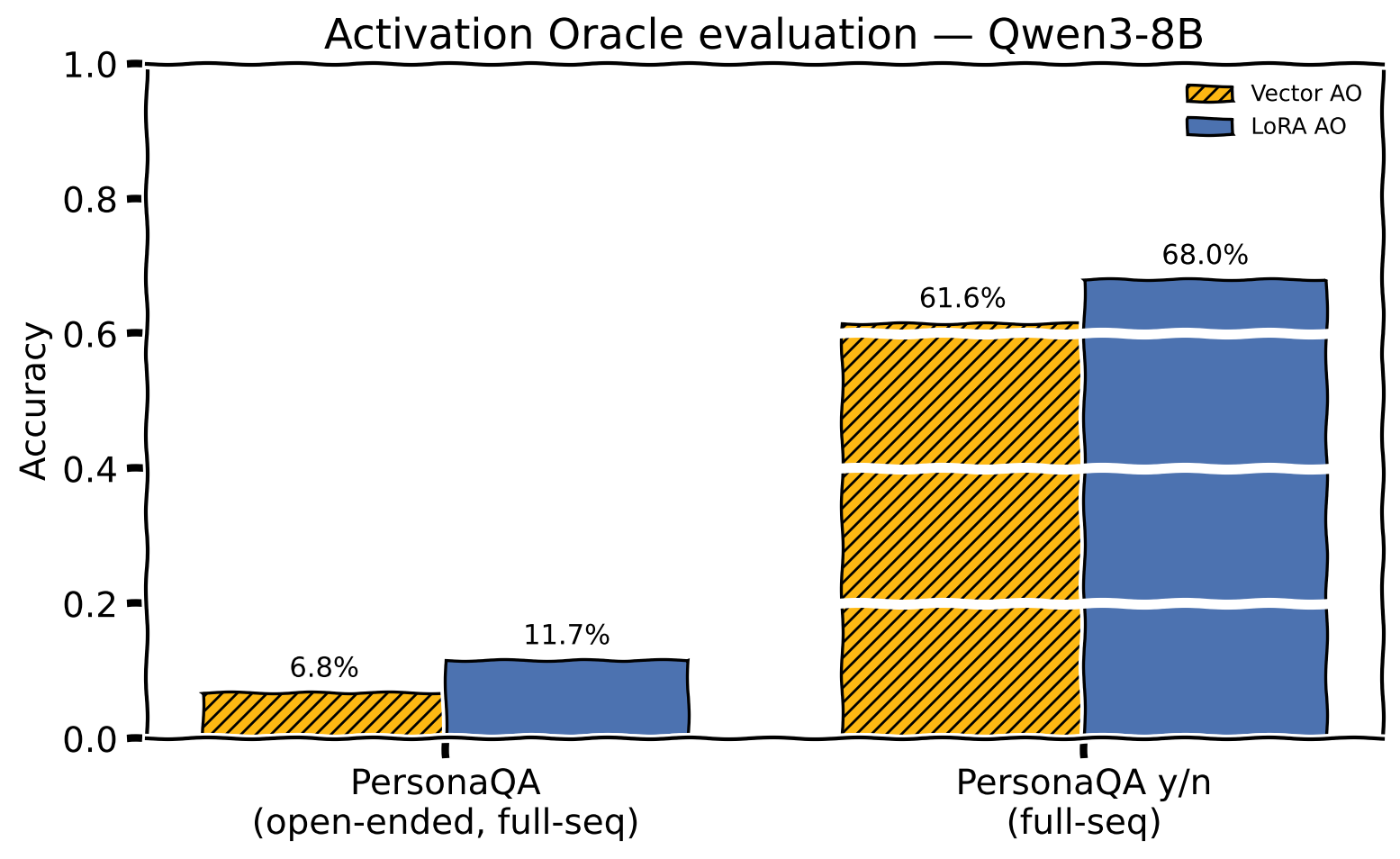

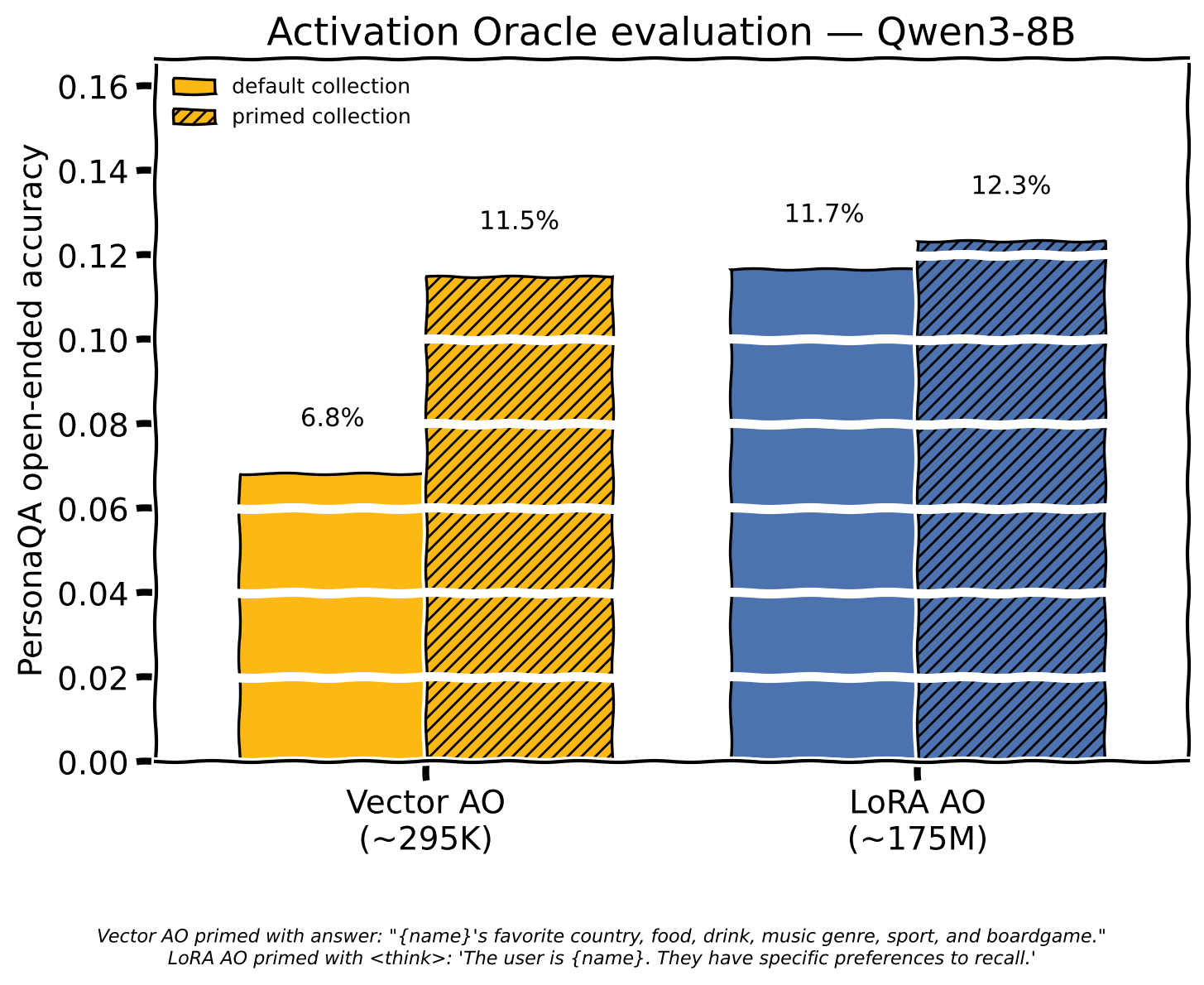

[^personaqa]: PersonaQA from the AO paper [(§5.1)](https://arxiv.org/html/2512.15674v2#S5.SS1): 100 synthetic personas each with six attributes (country, favorite food, drink, music genre, sport, boardgame). A Qwen3-8B LoRA ([`adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs`](https://huggingface.co/adamkarvonen/Qwen3-8B-personaqa_shuffled_3_epochs)) is fine-tuned on facts for all 100 personas. The oracle is handed activations collected from a target prompt against the PersonaQA LoRA that does not contain the answer. Open-ended asks `What is the person's favorite sport?`, y/n poses `Is this person's favorite sport hockey?`. I've matched an oddity of the original PersonaQA Y/N where Y answers are always Pascal Case and N answers are always lowercase to allow for direct comparisons with those results. This evaluation is OoD so theoretically the trained LoRA or vector shouldn't be able to cheat with this side channel, however our [ablation](#ablations) result seem to show the LoRA performing above chance at Y/N without any activations.

[^brittleness]: The AO paper makes an observation about the PersonaQA LoRAs: when queried directly in the training format — "What is X's favorite sport?" — they exceed 80% accuracy, on Y/N questions they drop to near random chance [(Appendix C.6.3)](https://arxiv.org/html/2512.15674v2#A3.SS6.SSS3)

[^scion]: [Training Deep Learning Models with Norm-Constrained LMOs](https://arxiv.org/abs/2502.07529) (Pethick et al., 2025)

---